LANDSONG is an audio-visual art project by artist and The Light Surgeons creative director Christopher Thomas Allen that has evolved through his involvement in the Living In Changing Landscapes Research residency in 2024 in the East of England.

Allen was invited by Norfolk-based arts producers Collusion, in partnership with Norwich University of the Arts and the Broads Authority, to participate in a 6-month research and development project to explore the subject of the climate crisis in relation to the changing landscapes of East Anglia. He set out to research and develop an ambitious co-created immersive audio-visual artwork that could bring together intergenerational voices from this region to reflect on collective challenges of climate adaptation. The project aims to develop new knowledge, raise awareness, and inspire action around climate change’s impact on local communities in East Anglia and beyond.

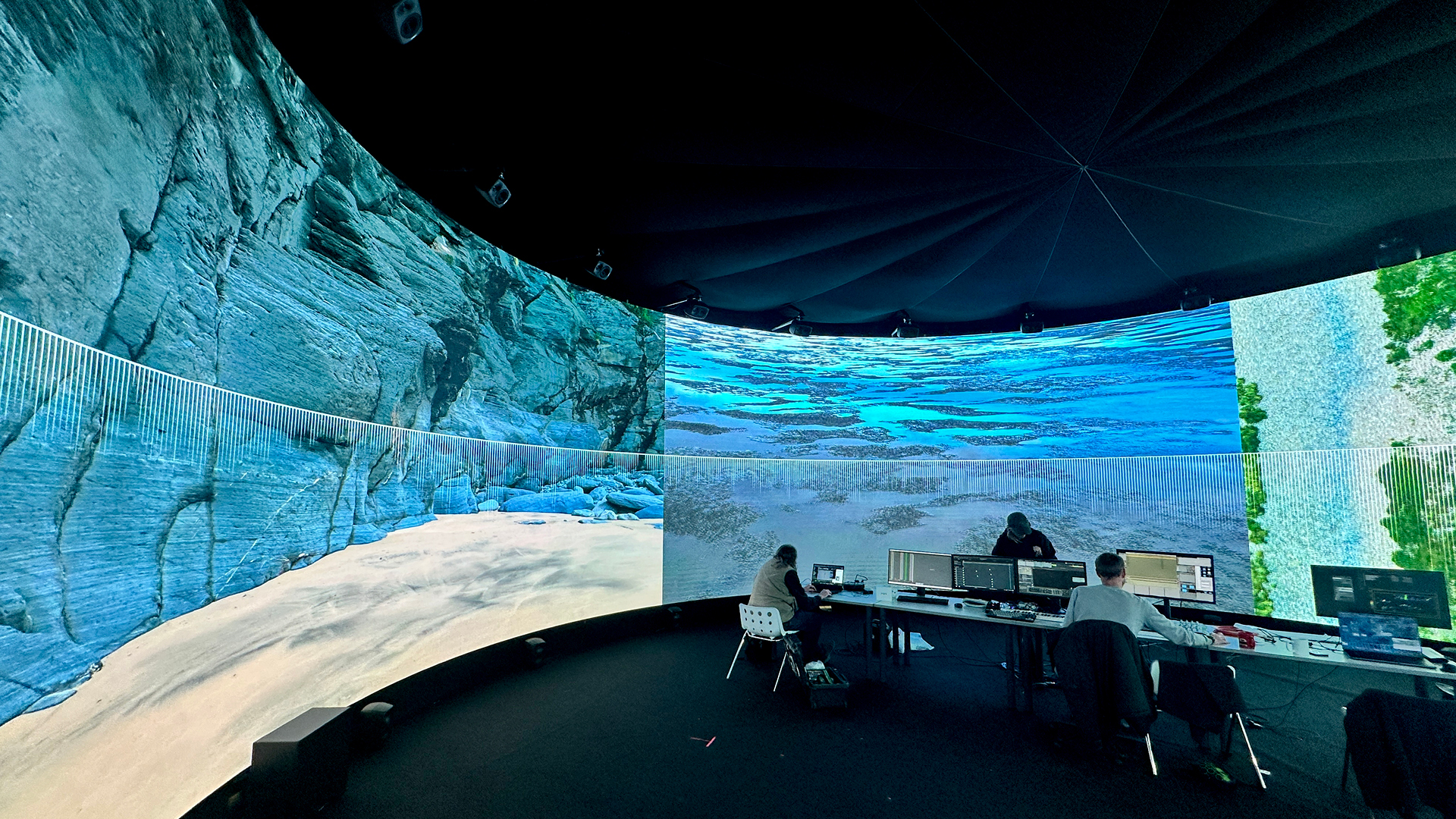

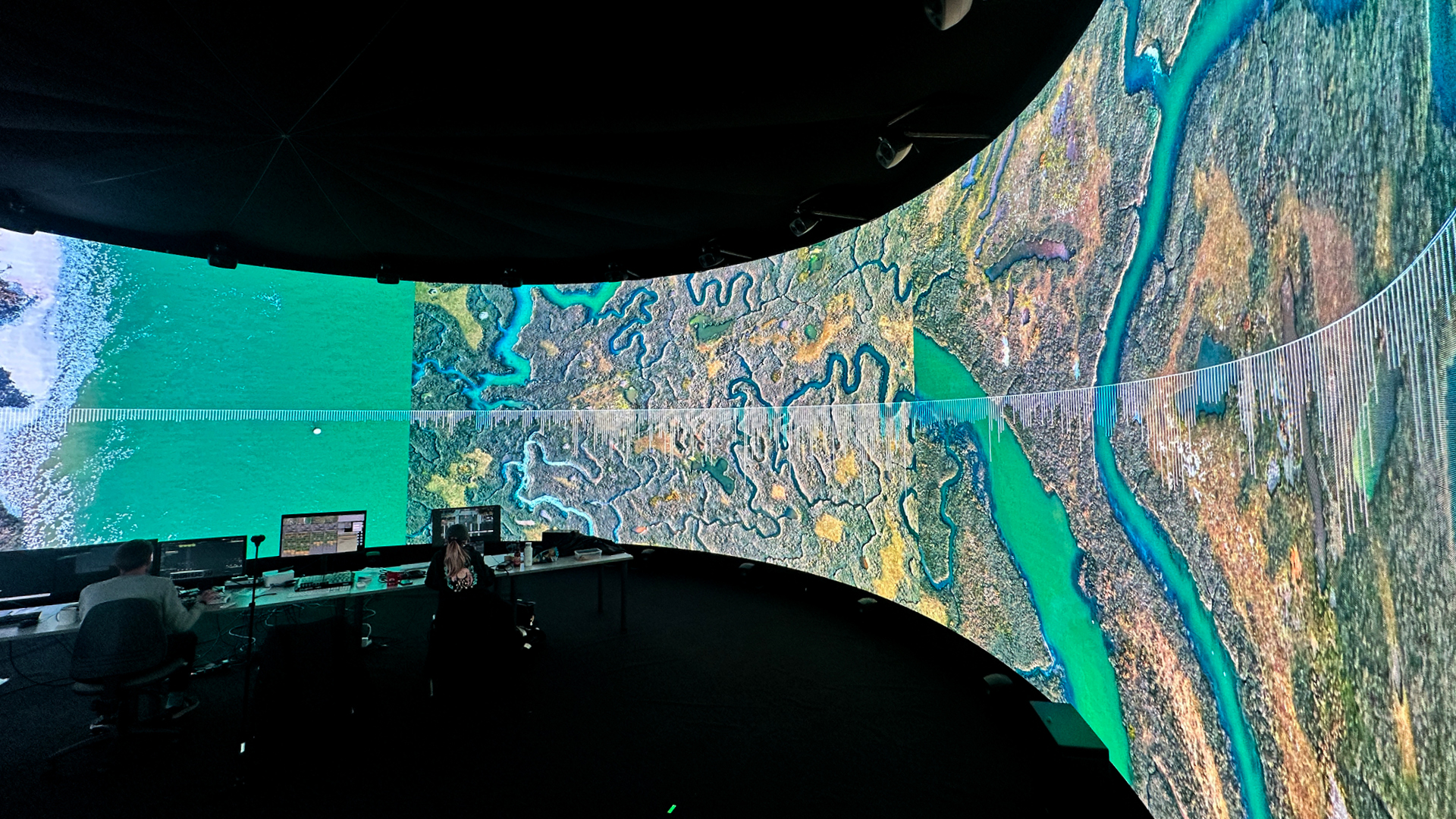

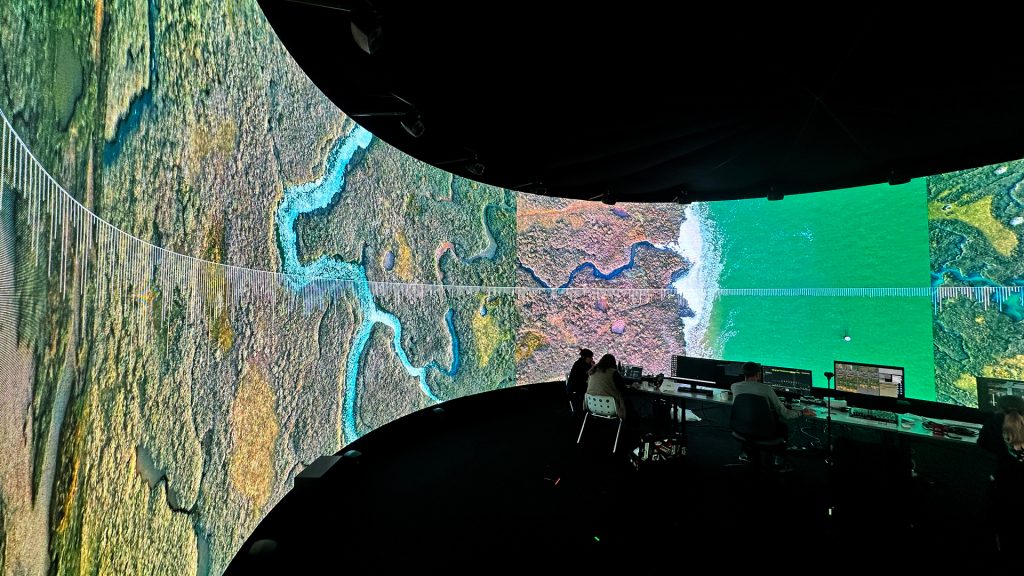

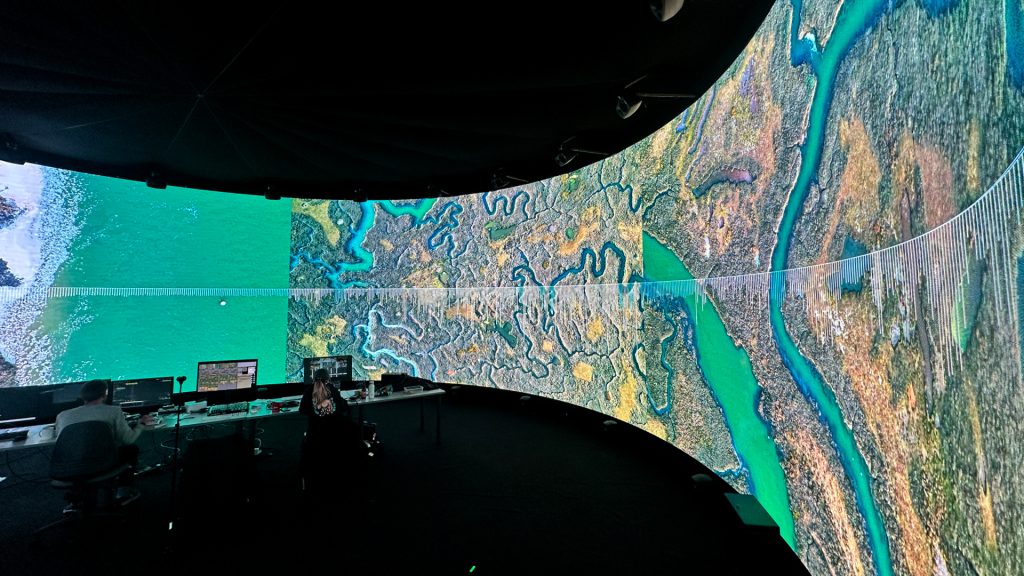

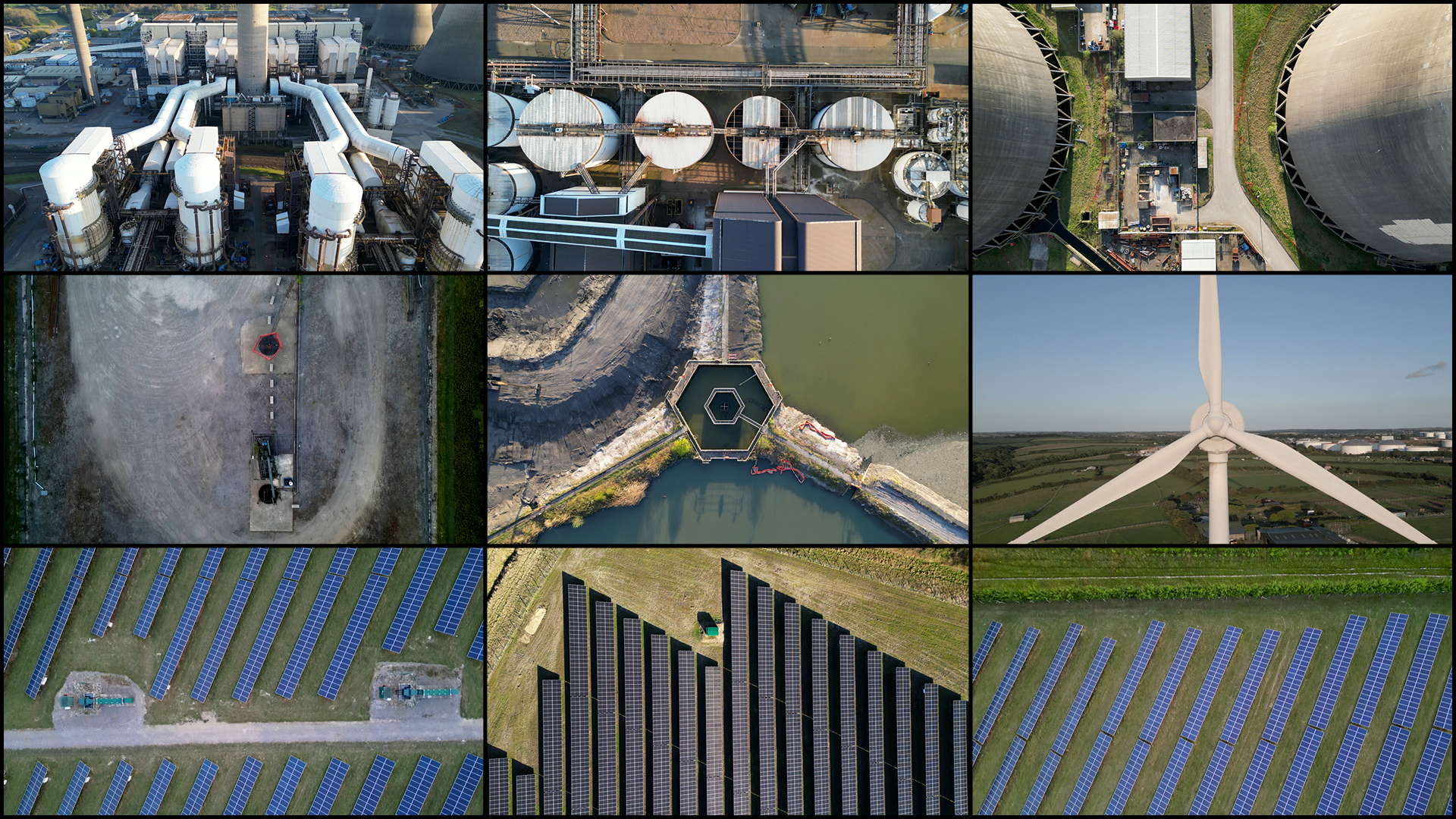

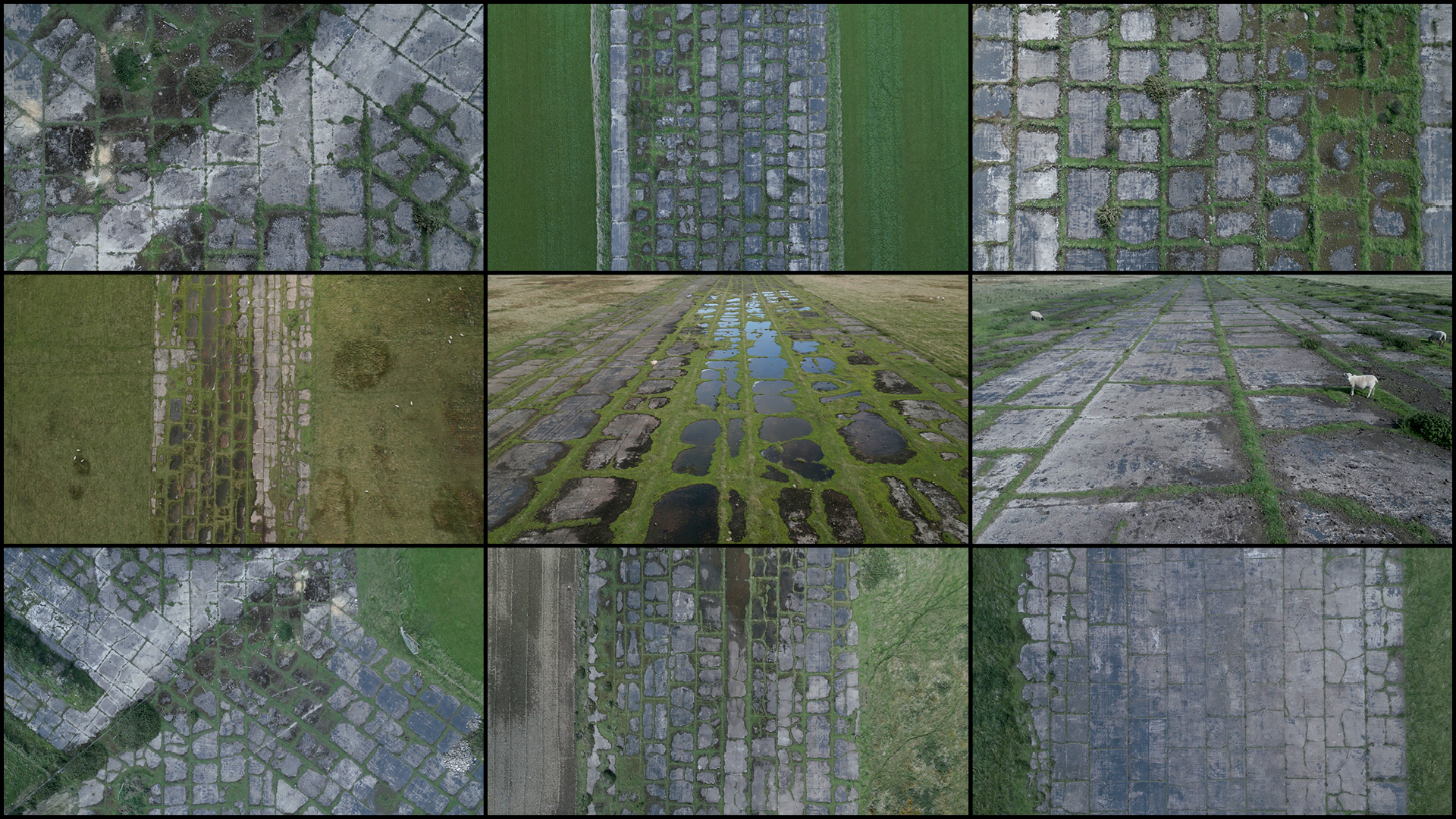

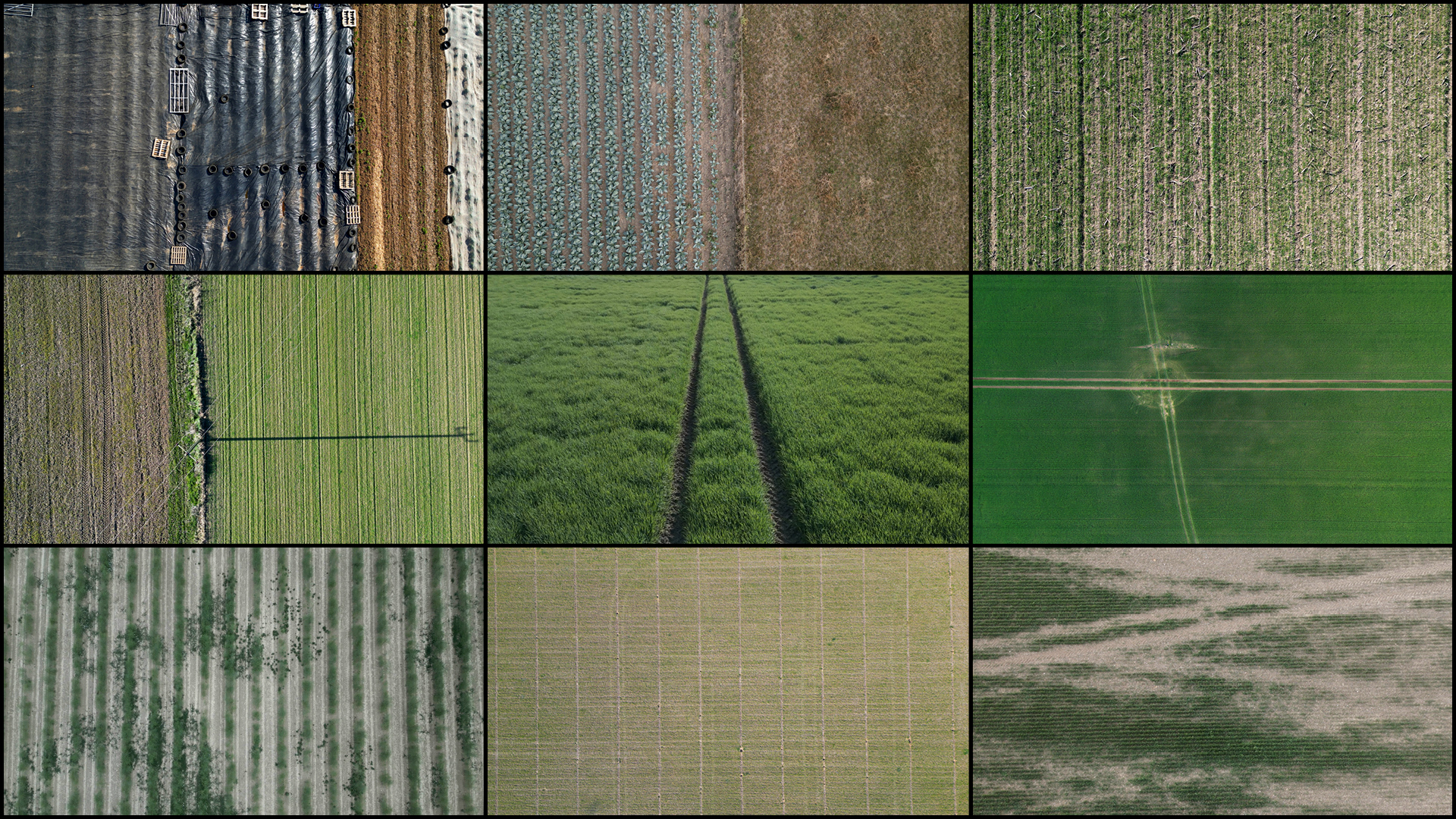

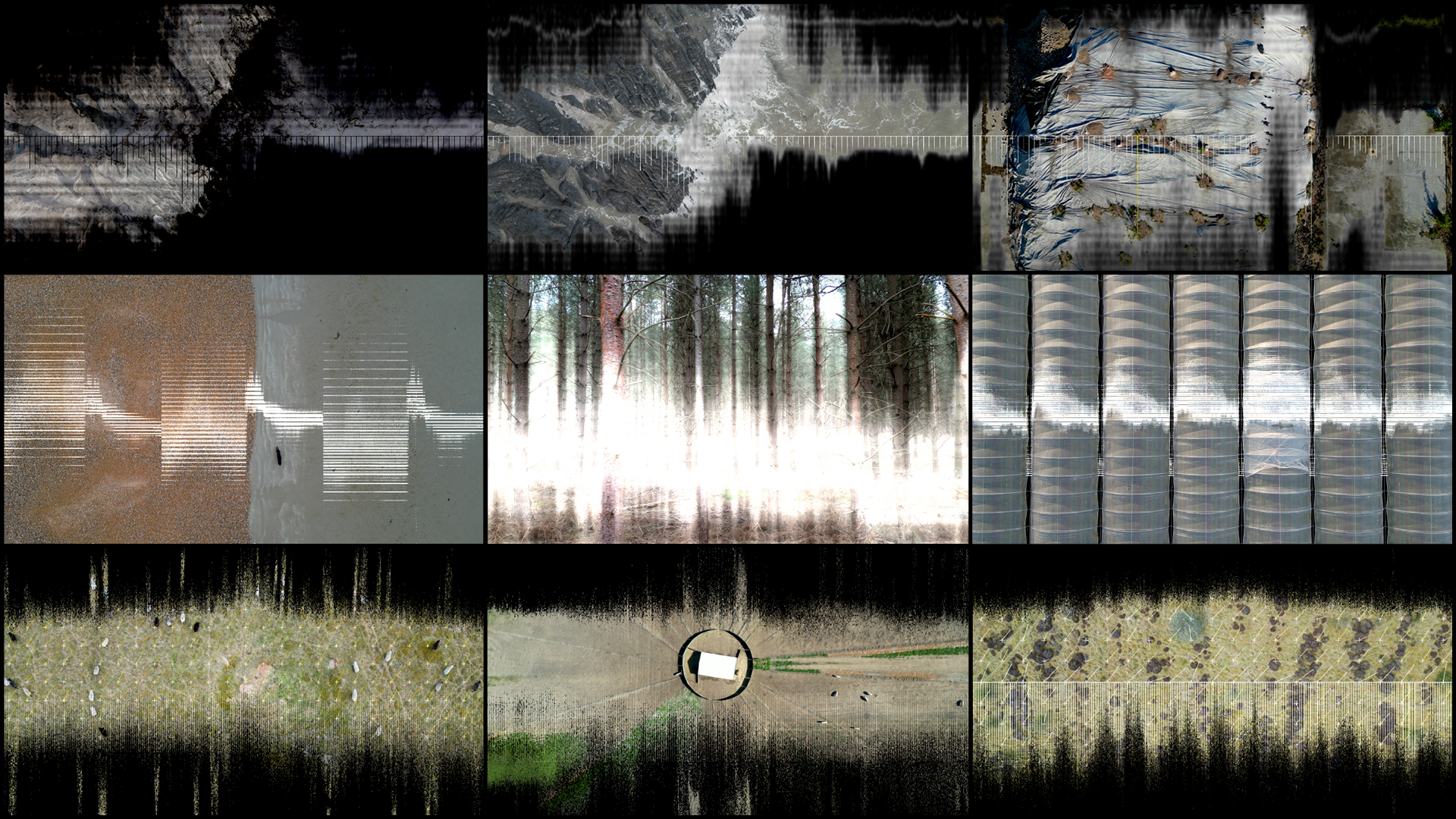

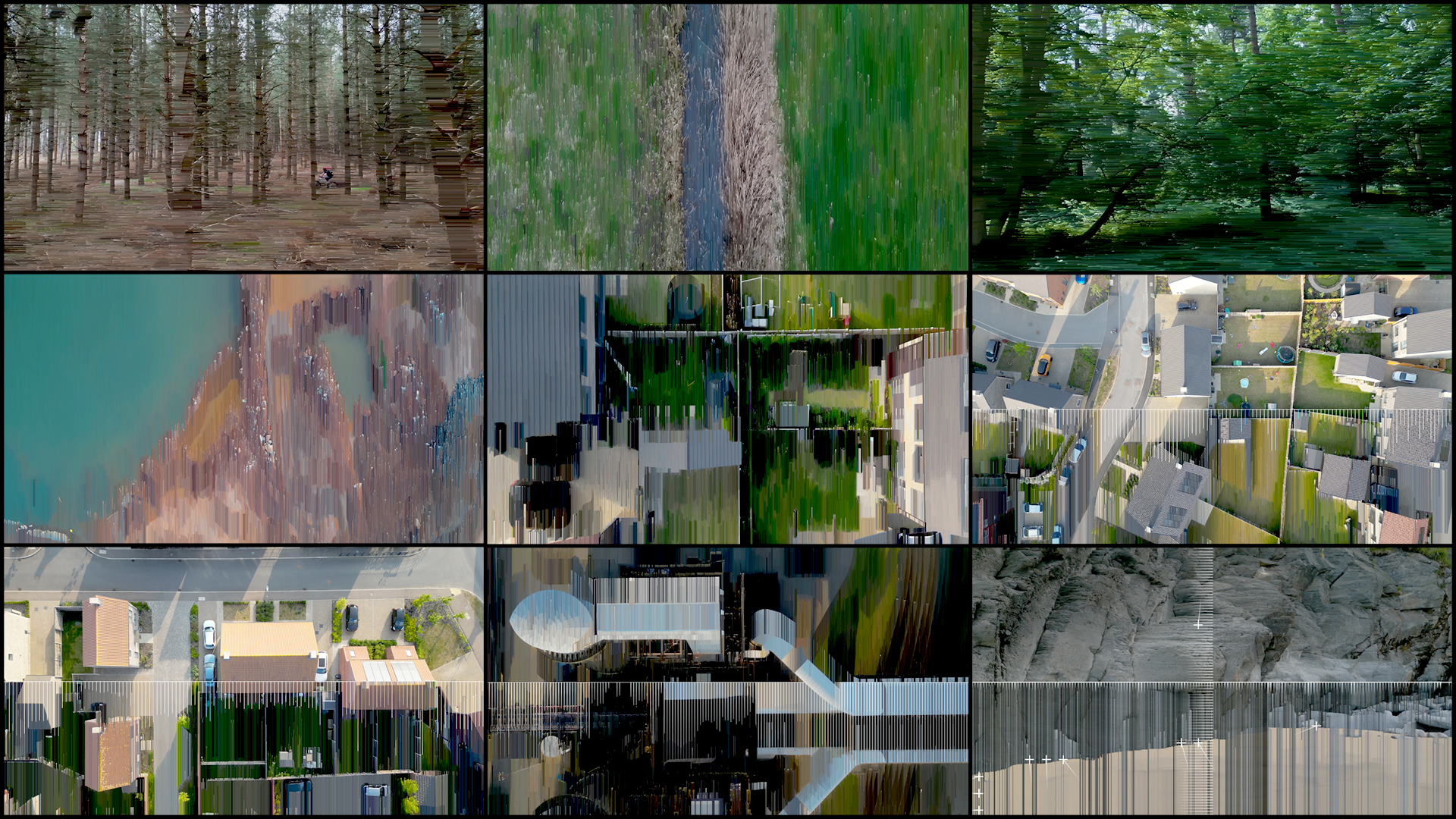

As part of this larger project, Allen focused on gathering a large archive of aerial drone footage, which he filmed himself across the UK between 2022 and 2025. This archive covers 12 distinct landscape typologies: coastal, geological, rivers, wetlands, greenfield, forests, agricultural, earthworks, brownfield, industrial, transport, and suburban, with a large collection of new footage filmed in 2024 and 2025 in East Anglia and the Norfolk Broads.

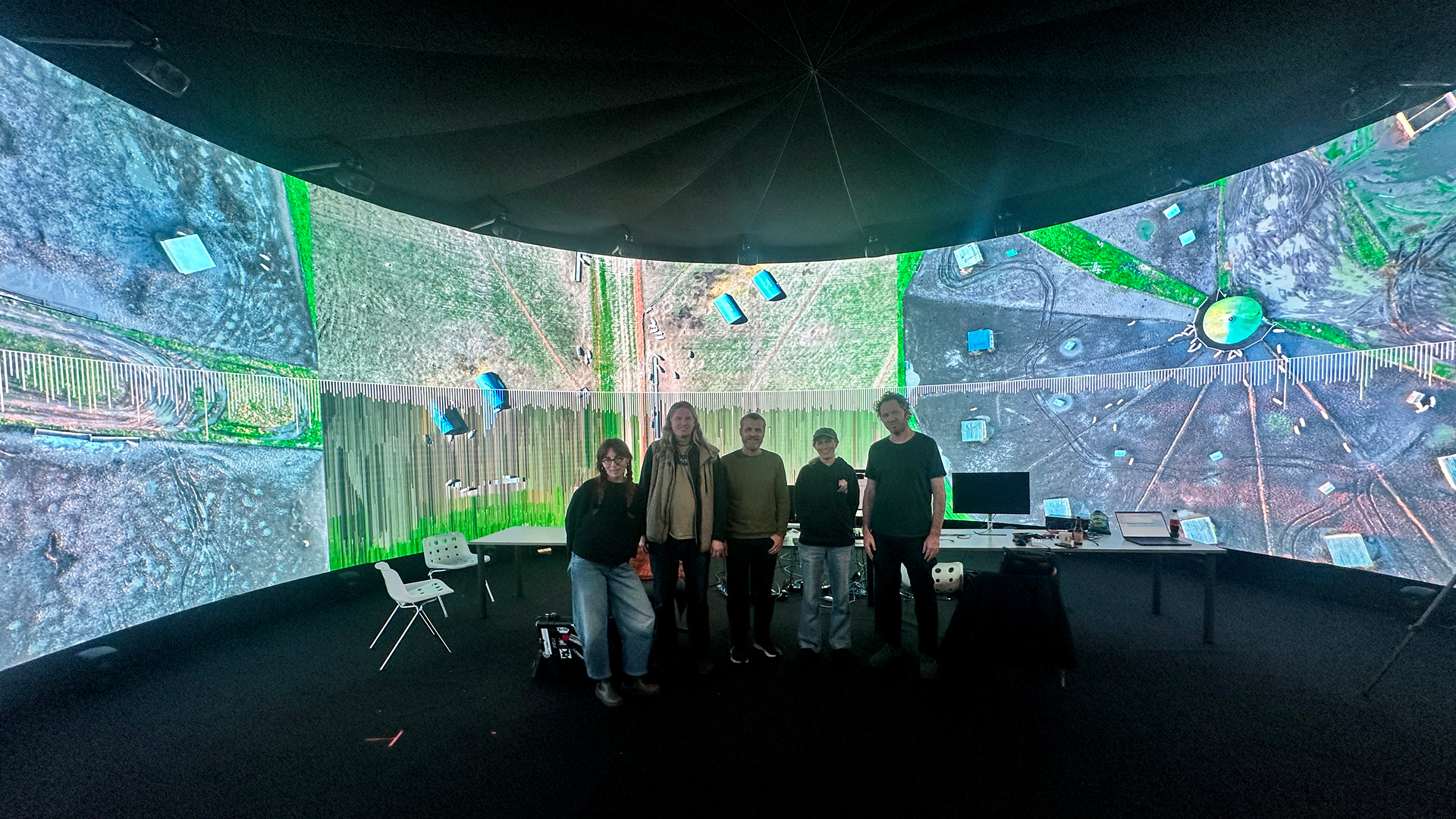

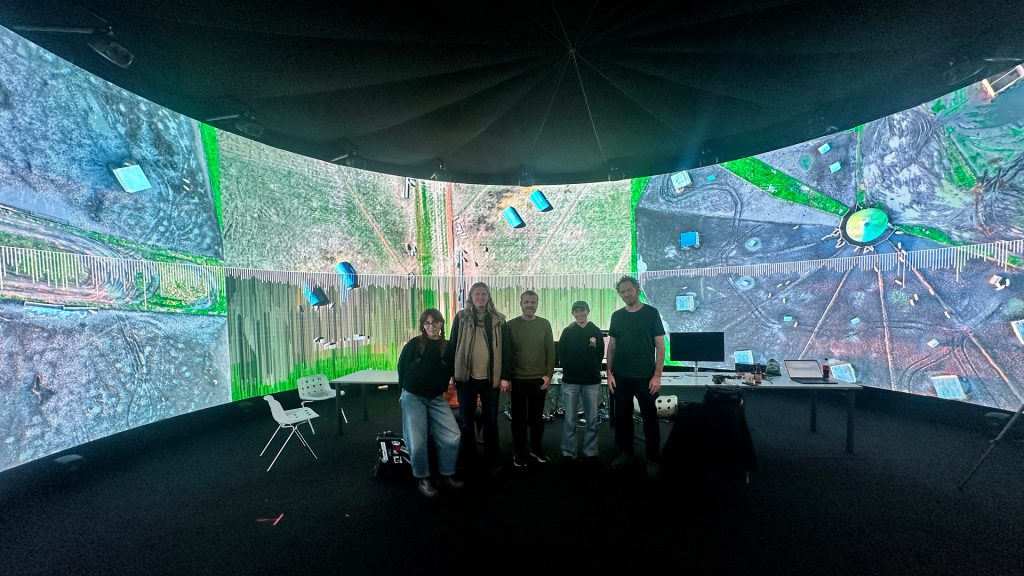

In 2025 the project was developed further through a Research Fellowship in the Institute for Creative Technologies at Norwich University of the Arts. Allen invited long-time collaborator, artist and composer Tim Cowie to work on the idea with him. Together, Allen and Cowie developed systems for the sonification work. In late 2025, with additional technical consultancy from media artist Marcus Lyall, they invited TouchDesigner creative developer Cécile Lebon into the project to test ways to build custom plugins and send data from VDMX to Ableton.

During testing in the IVSL space at NUA, the project also received technical support from creative technologist Richard Hall and Roz Gardner from Collusion, as well as advice and support on Max for Live and the cracking open of Meta-Synth plugins from Dr Philip Archer, Senior Technician and Lecturer in Sound at Norwich University of the Arts.

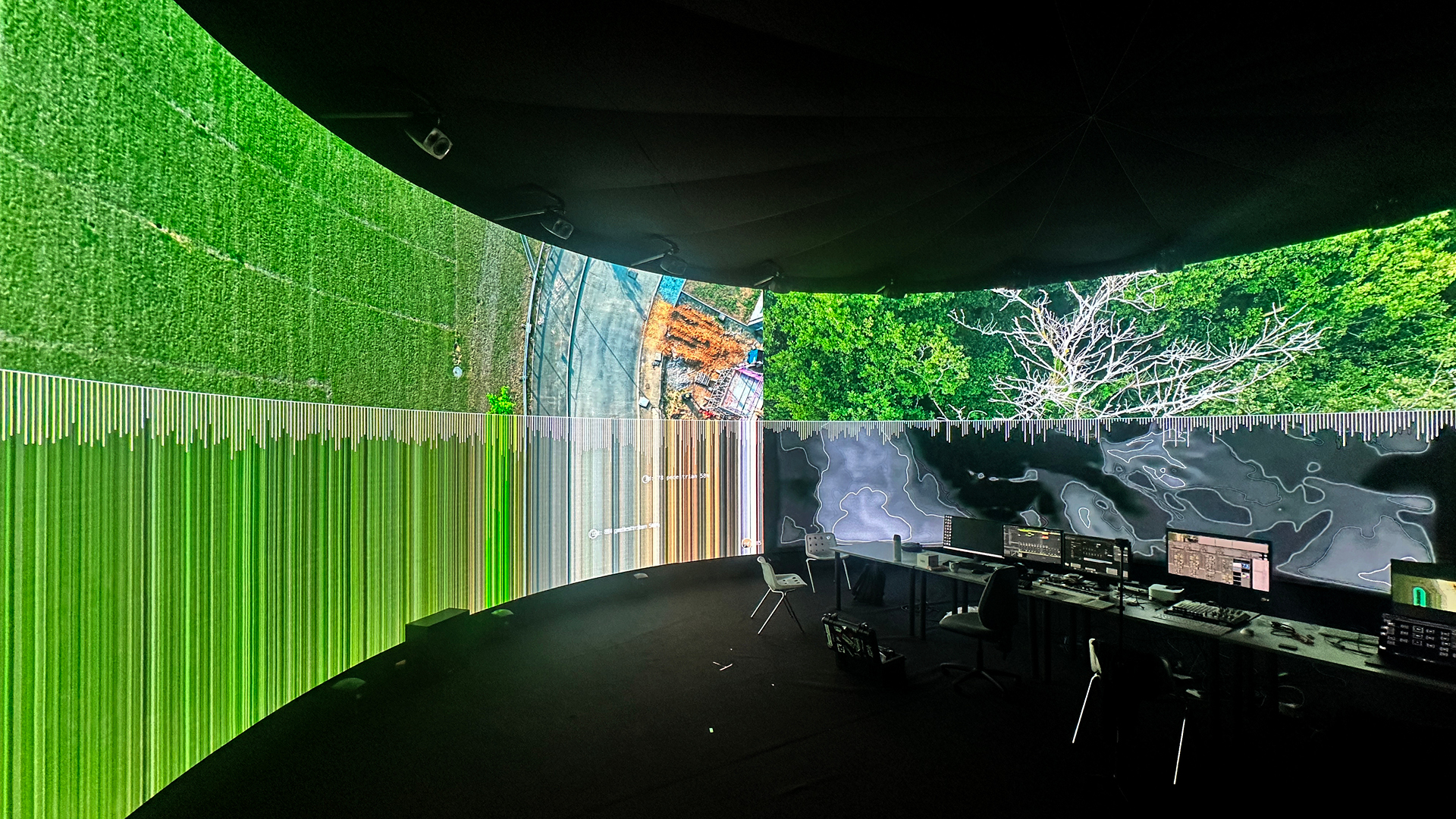

The collaborative, practice-based research the group undertook investigates how a video archive of landscape footage might be used to generate and affect musical compositions. They approached this through data-to-sound mapping, balancing quantitative pixel analysis with qualitative artistic interpretation, and asked how emotional responses might be evoked directly from this collection of visual material through a generative approach to sound design and real-time visual processing.

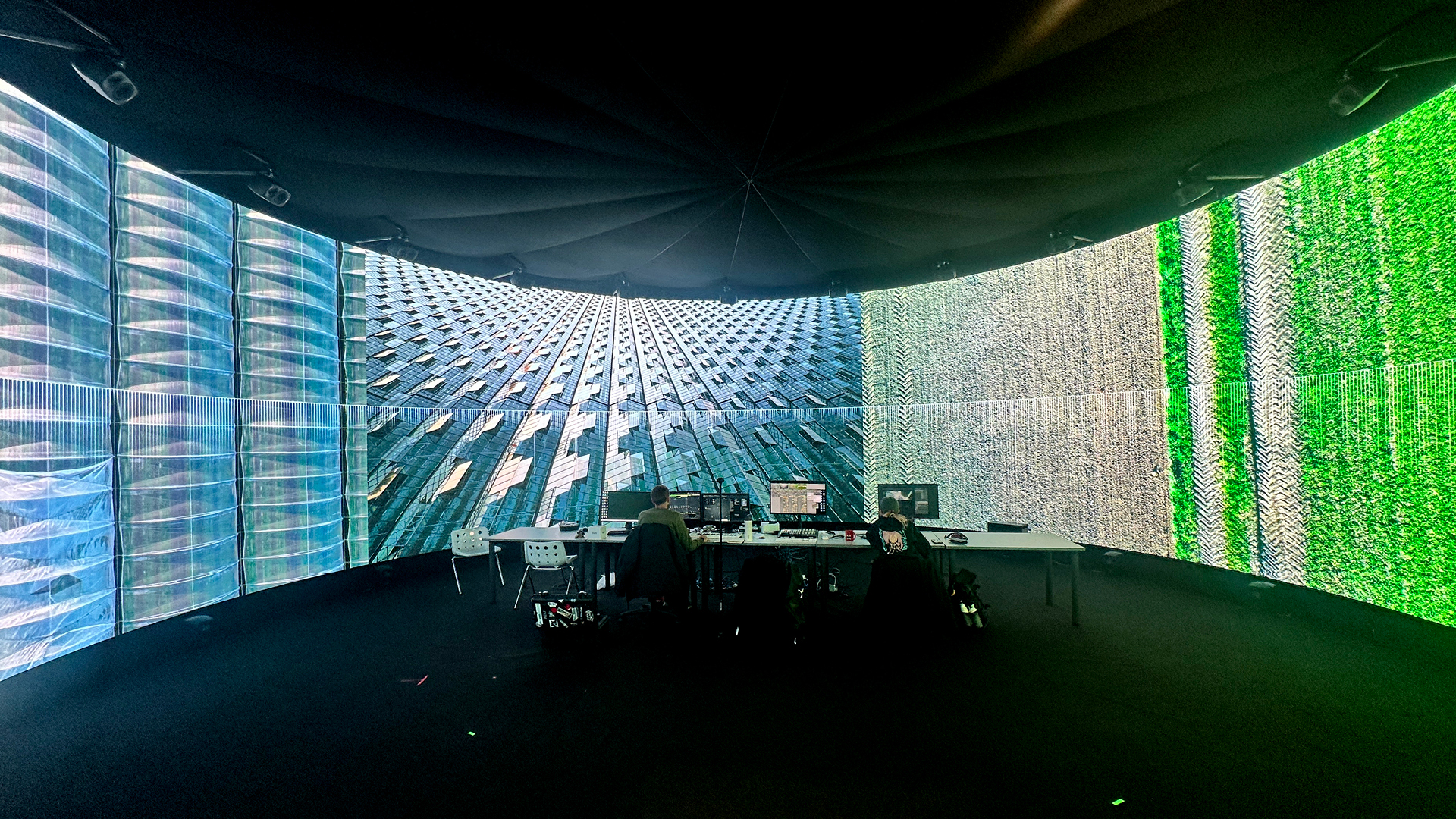

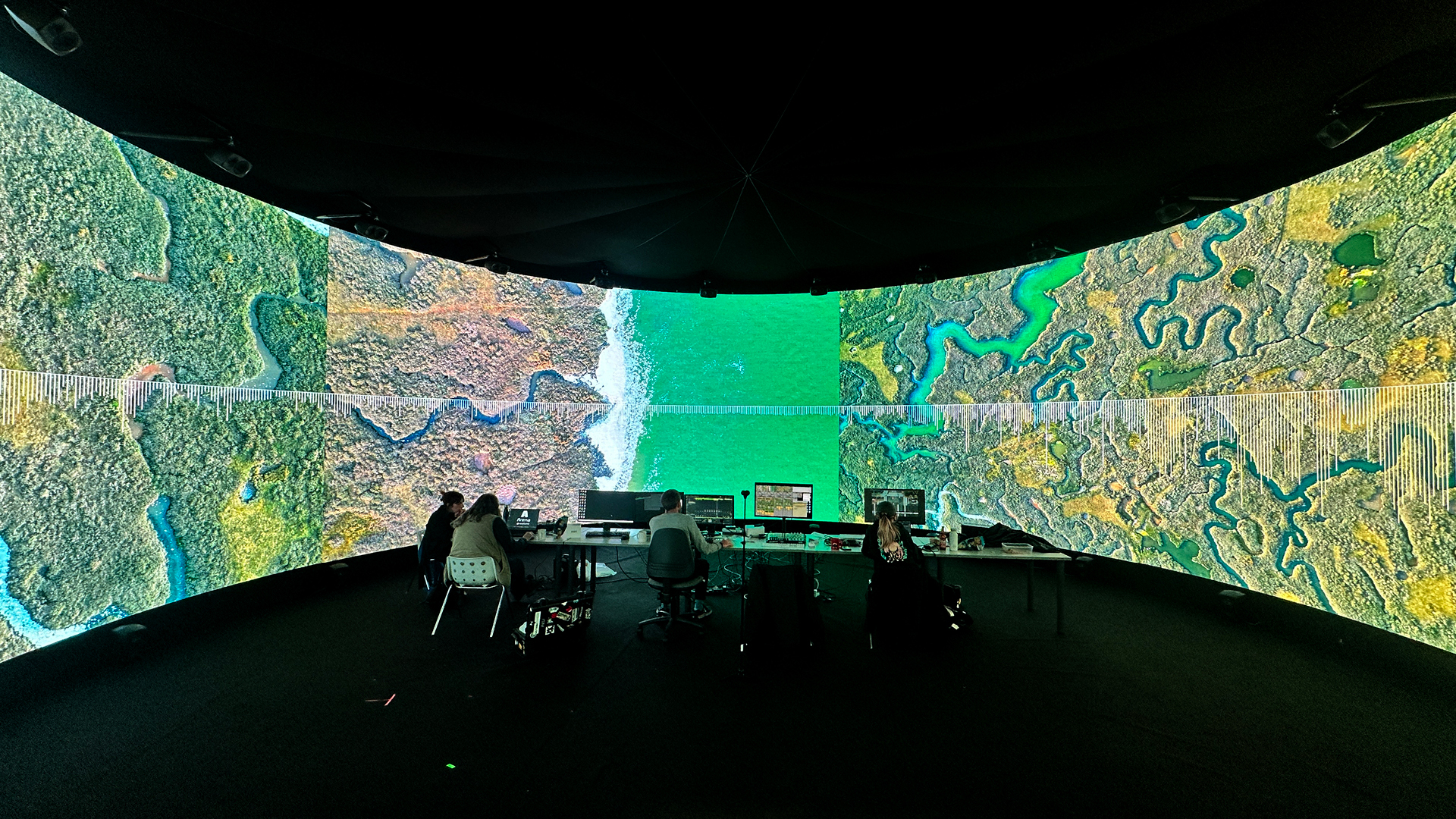

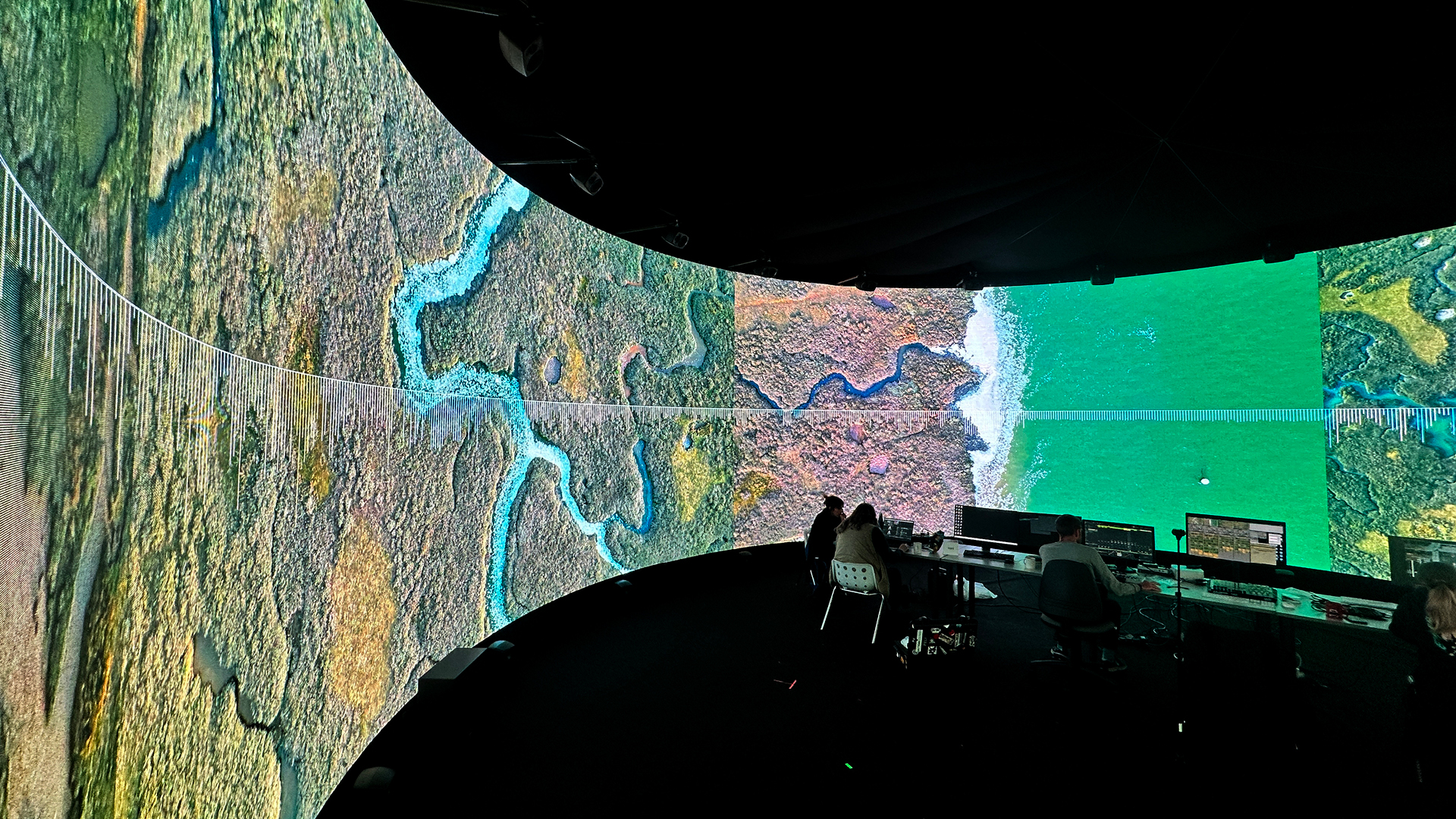

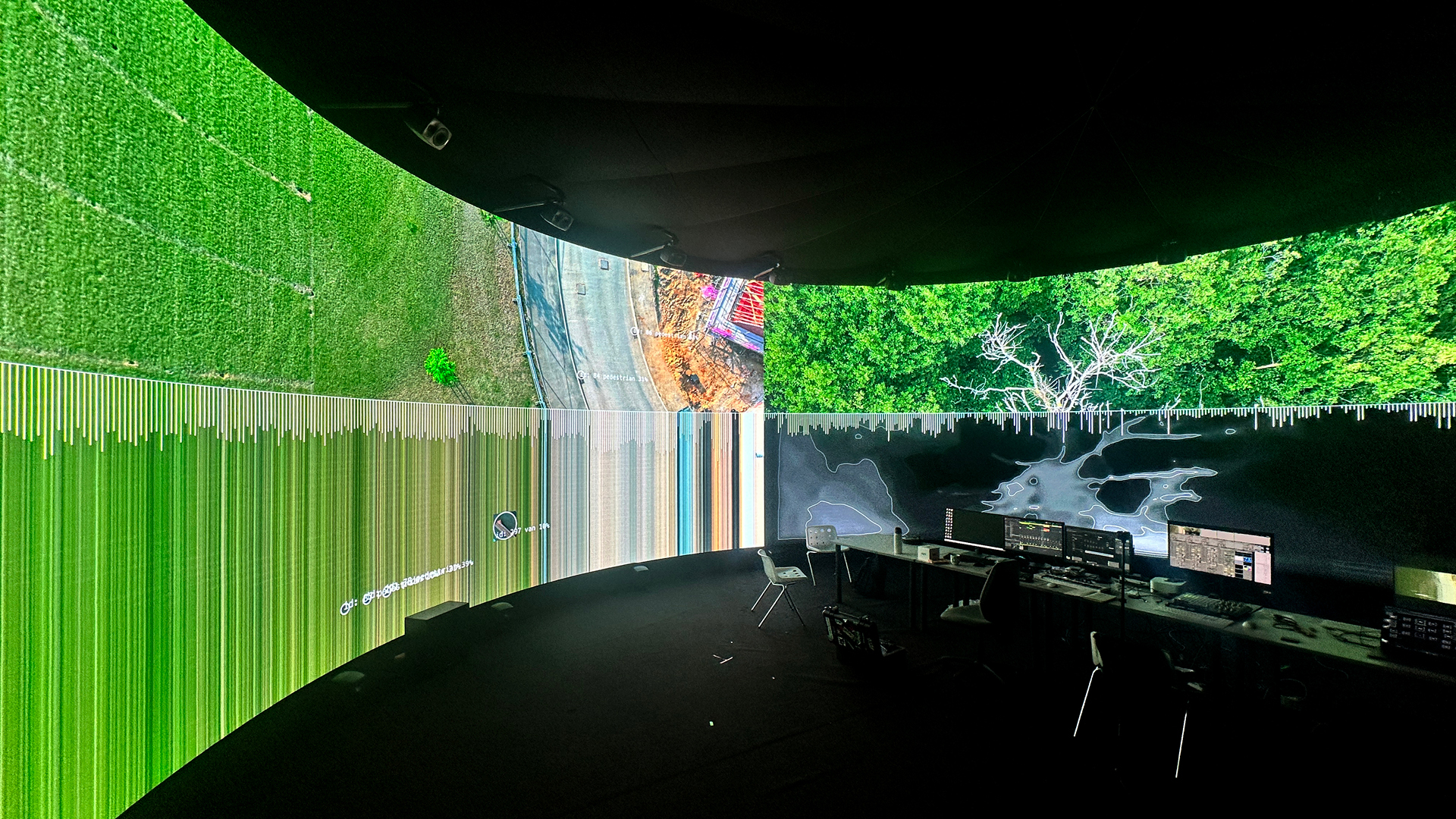

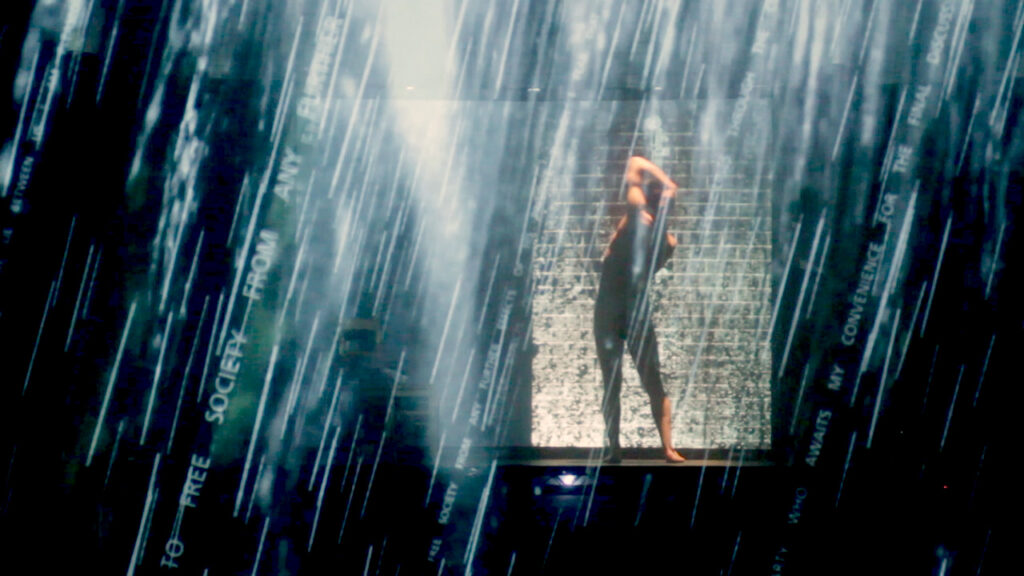

This research explored various digital approaches to forming new relationships between landscape and music in the IVSL space, framed by a set of questions: How might we explore the relationship between landscape and music in a generative manner? What might we discover about these different typologies by translating them into sound? Which footage and musical interjections work on their own and in the immersive 360° audio setup of the IVSL space? How might this sonification process be made visually readable to the audience?

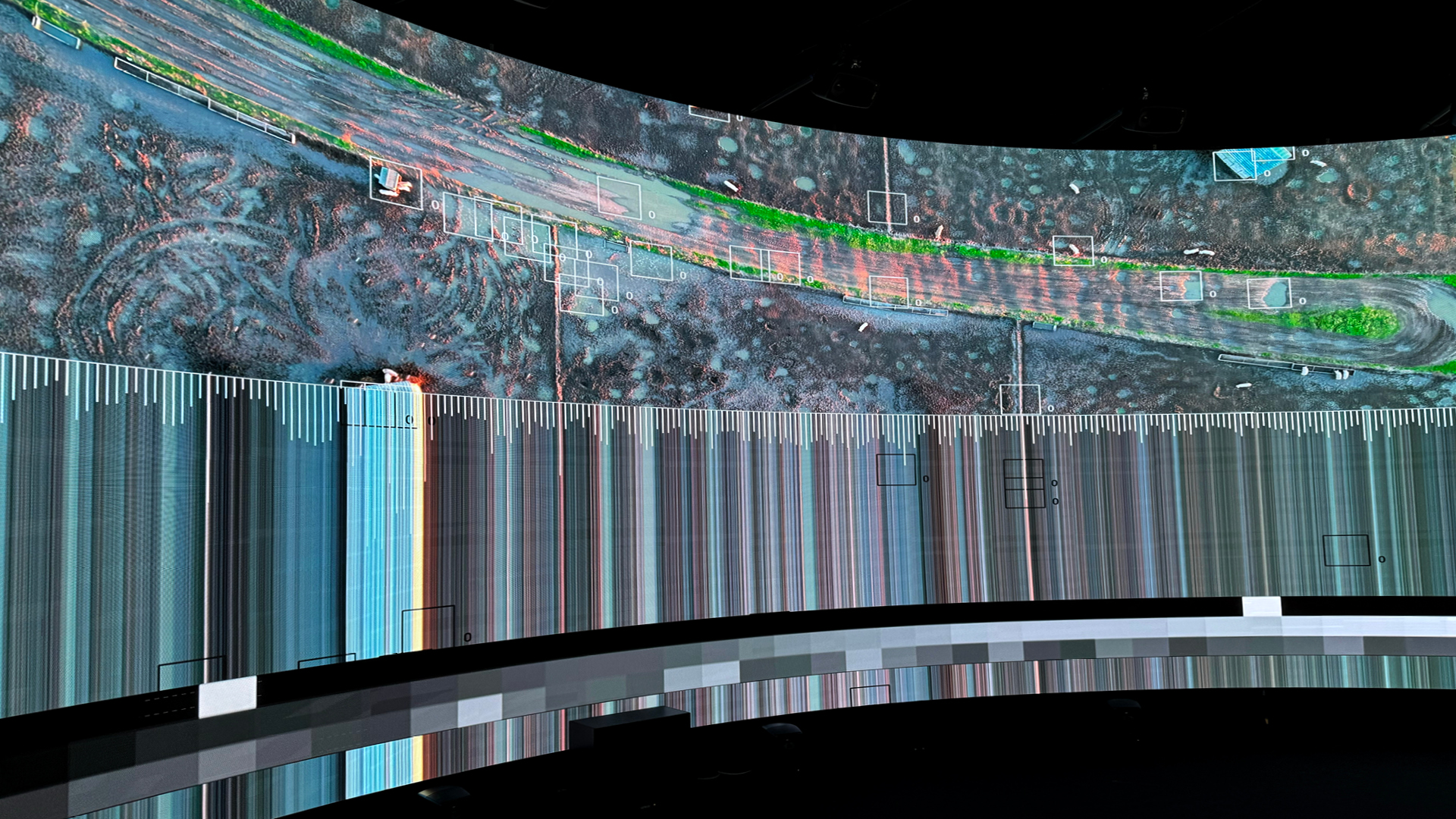

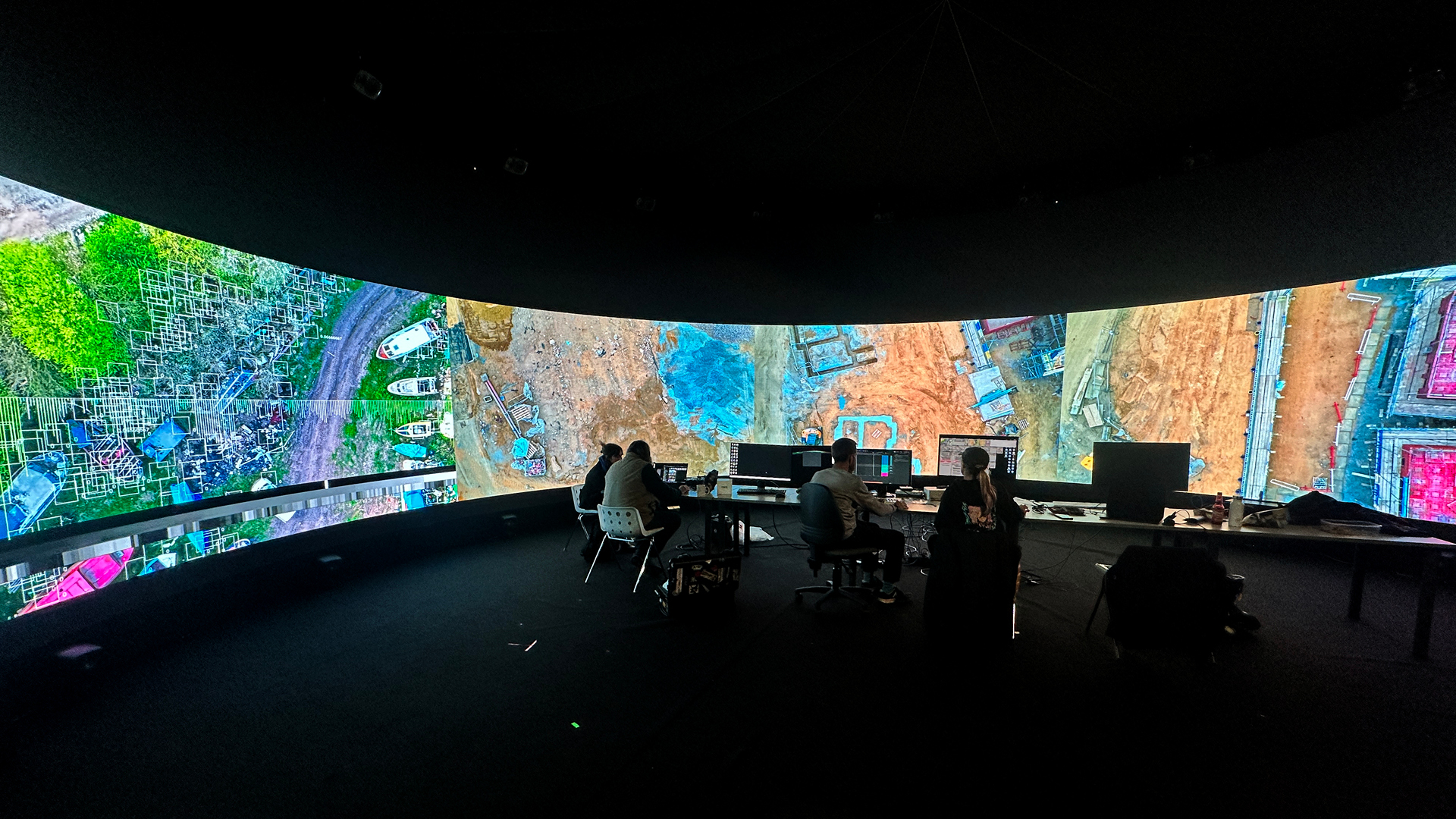

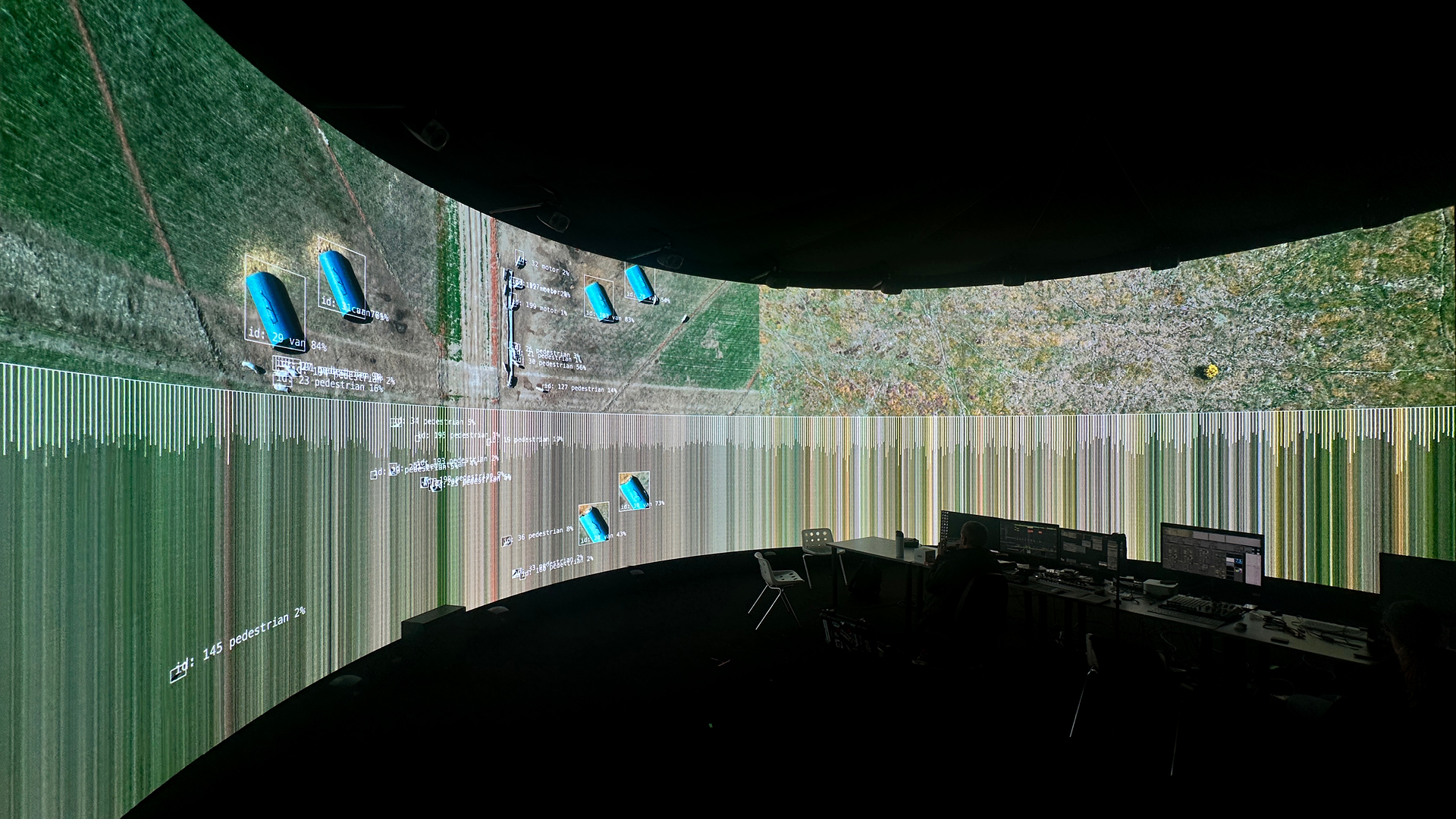

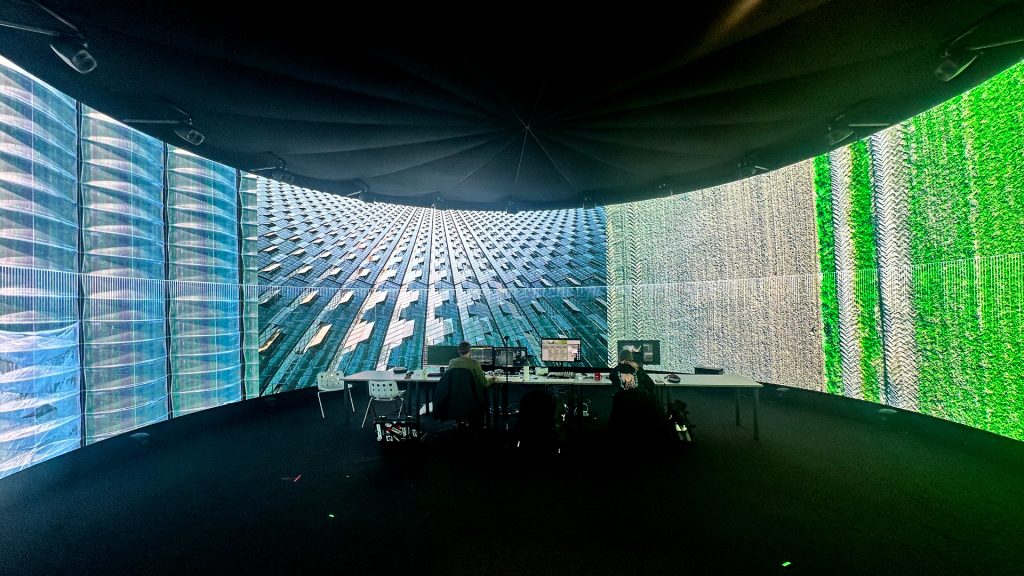

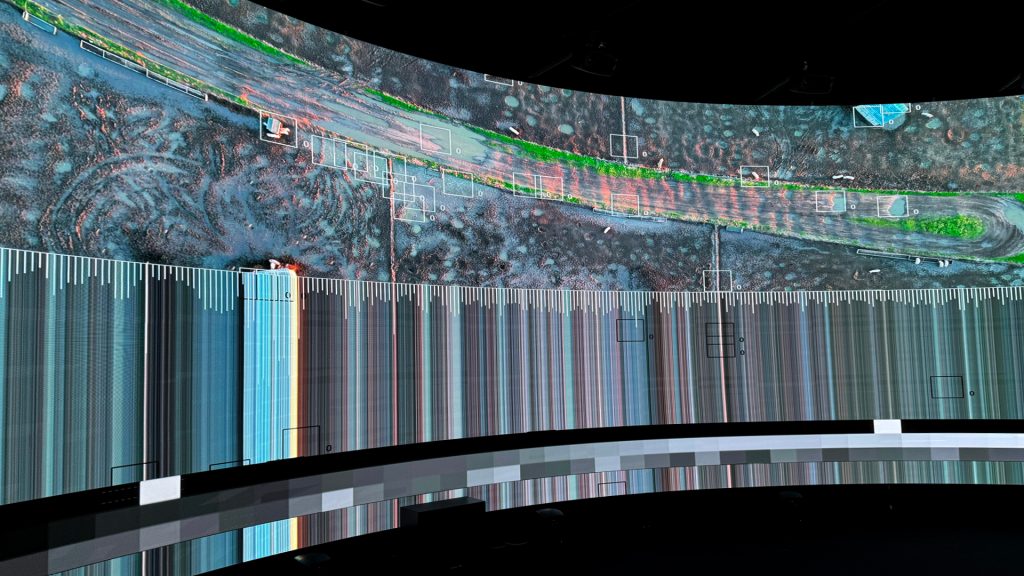

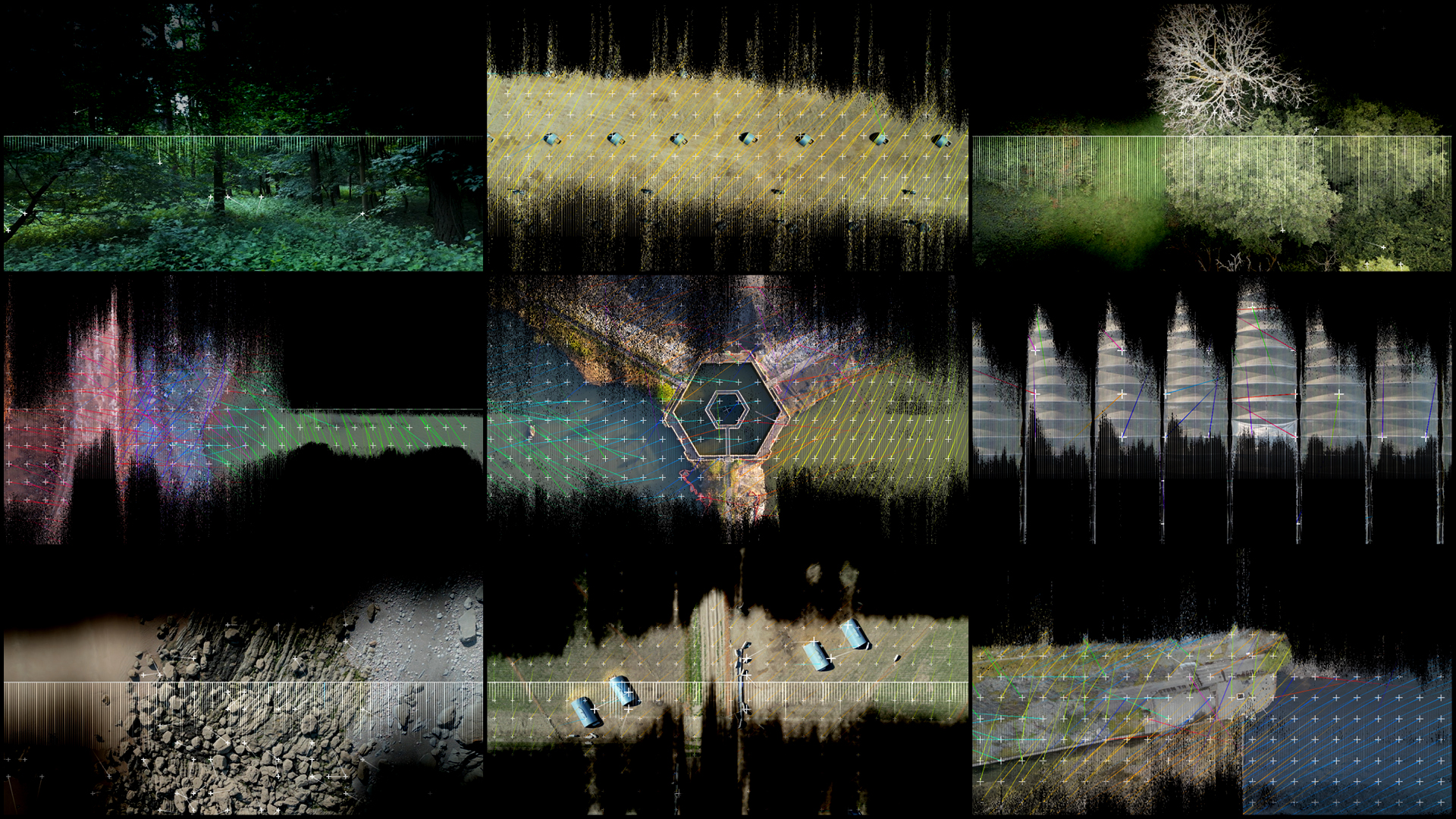

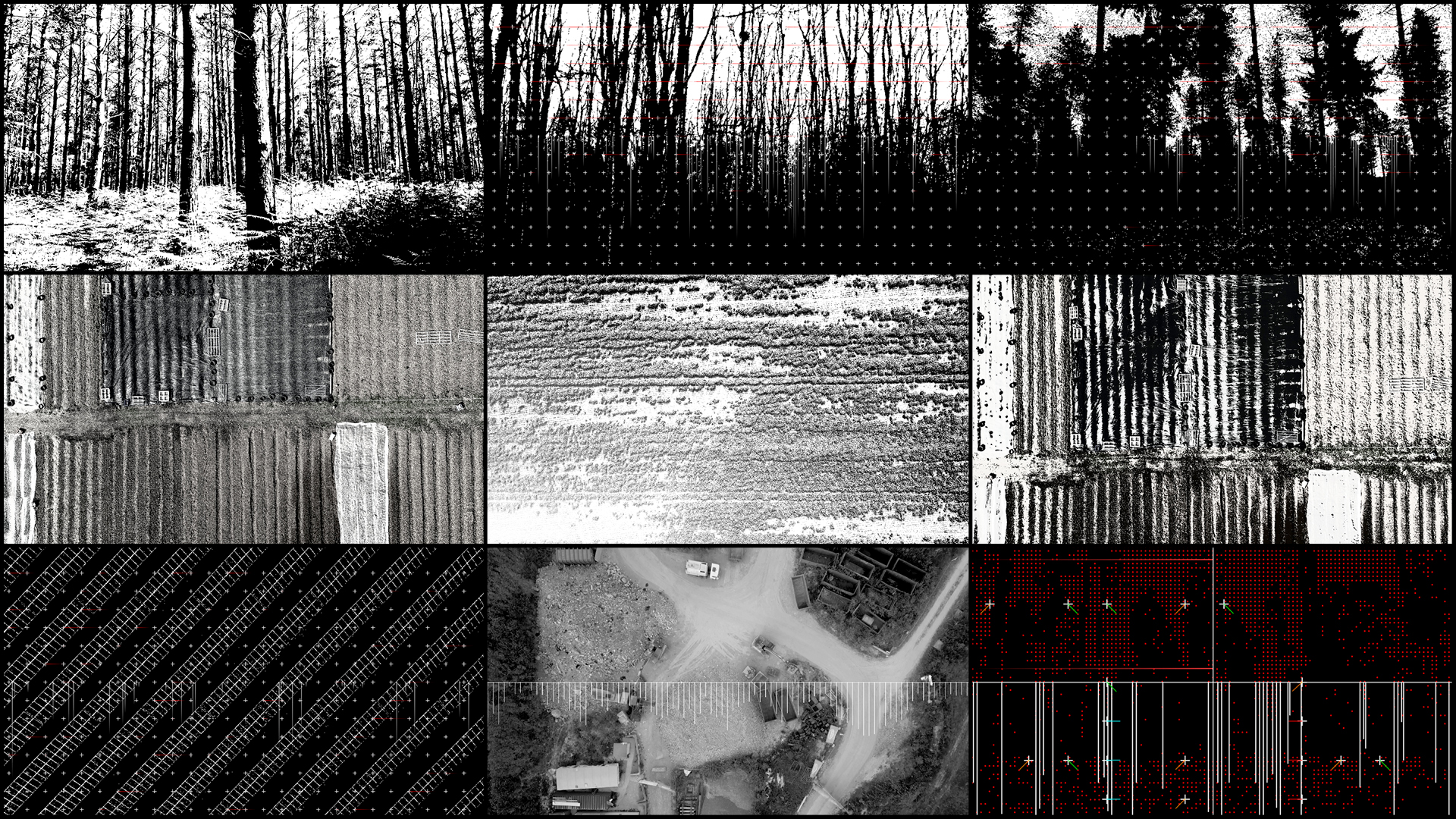

The methodology was to integrate three software platforms, VDMX, TouchDesigner and Ableton Live, into a pipeline in which audio and visual feedback could be explored in real time in the 360° IVSL space. VDMX and TouchDesigner were used to process the aerial footage and to apply different analysis techniques. This involved implementing pre-trained AI models for object detection and depth mapping, as well as blob tracking. A key aspect of the sonification is the real-time extraction of visual data (brightness, movement, colour temperature), which is sent to Ableton Live. VDMX and TouchDesigner were also used to explore different visualisations of this process, giving the audience feedback on how the sound is generated. OSC/MIDI data streams from TouchDesigner were sent to Ableton, where they controlled tonal beds (filtered using spectral analysis) and triggered solo instruments (activated by objects crossing central frame lines).
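The extraction step described above can be illustrated with a minimal Python sketch. It is not the project's TouchDesigner or VDMX setup: the three measures (mean luma for brightness, frame differencing for movement, a red-versus-blue balance as a rough stand-in for colour temperature), the `/landsong/...` OSC addresses, and the host and port are all assumptions chosen for illustration. The message layout follows the OSC 1.0 encoding for a single float argument.

```python
import socket
import struct


def _luma(pixel):
    # Rec. 601 luma, normalised to 0..1, for one (r, g, b) pixel in 0..255.
    r, g, b = pixel
    return (0.299 * r + 0.587 * g + 0.114 * b) / 255.0


def frame_features(frame, prev_frame=None):
    """Return illustrative brightness, movement and warmth values in 0..1.

    `frame` is a list of rows, each row a list of (r, g, b) tuples.
    These definitions are assumptions, not the project's own measures.
    """
    pixels = [px for row in frame for px in row]
    n = len(pixels)
    lumas = [_luma(px) for px in pixels]
    brightness = sum(lumas) / n

    # Movement: mean absolute luma change against the previous frame.
    movement = 0.0
    if prev_frame is not None:
        prev = [_luma(px) for row in prev_frame for px in row]
        movement = sum(abs(a - b) for a, b in zip(lumas, prev)) / n

    # Warmth: 0.5 is neutral, above is redder, below is bluer.
    mean_r = sum(px[0] for px in pixels) / n
    mean_b = sum(px[2] for px in pixels) / n
    warmth = 0.5 + (mean_r - mean_b) / (2 * 255.0)
    return {"brightness": brightness, "movement": movement, "warmth": warmth}


def _osc_pad(data):
    # OSC strings are null-terminated and padded to a multiple of 4 bytes.
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address, value):
    # One OSC message: address, type tag ",f", then a big-endian float32.
    return _osc_pad(address.encode("ascii")) + _osc_pad(b",f") + struct.pack(">f", value)


def send_features(features, host="127.0.0.1", port=9000):
    # Hypothetical target: any OSC listener on this host and port.
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        for name, value in features.items():
            sock.sendto(osc_message(f"/landsong/{name}", value), (host, port))
```

Calling `send_features(frame_features(frame, prev_frame))` once per video frame would emit three control values. On the Ableton side something would still need to receive and map them, for example a Max for Live device.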

Research Insights:

Field research included capturing aerial footage across a diverse range of UK landscapes and spaces, and developing flying techniques that emphasised visual musicality in this silent footage.

Much of the creative preparation outside the IVSL space focused on gathering and editing this footage, and on the technical discovery of how to combine the software into a workflow that could run at the high resolution and on the spatial audio system of the IVSL.

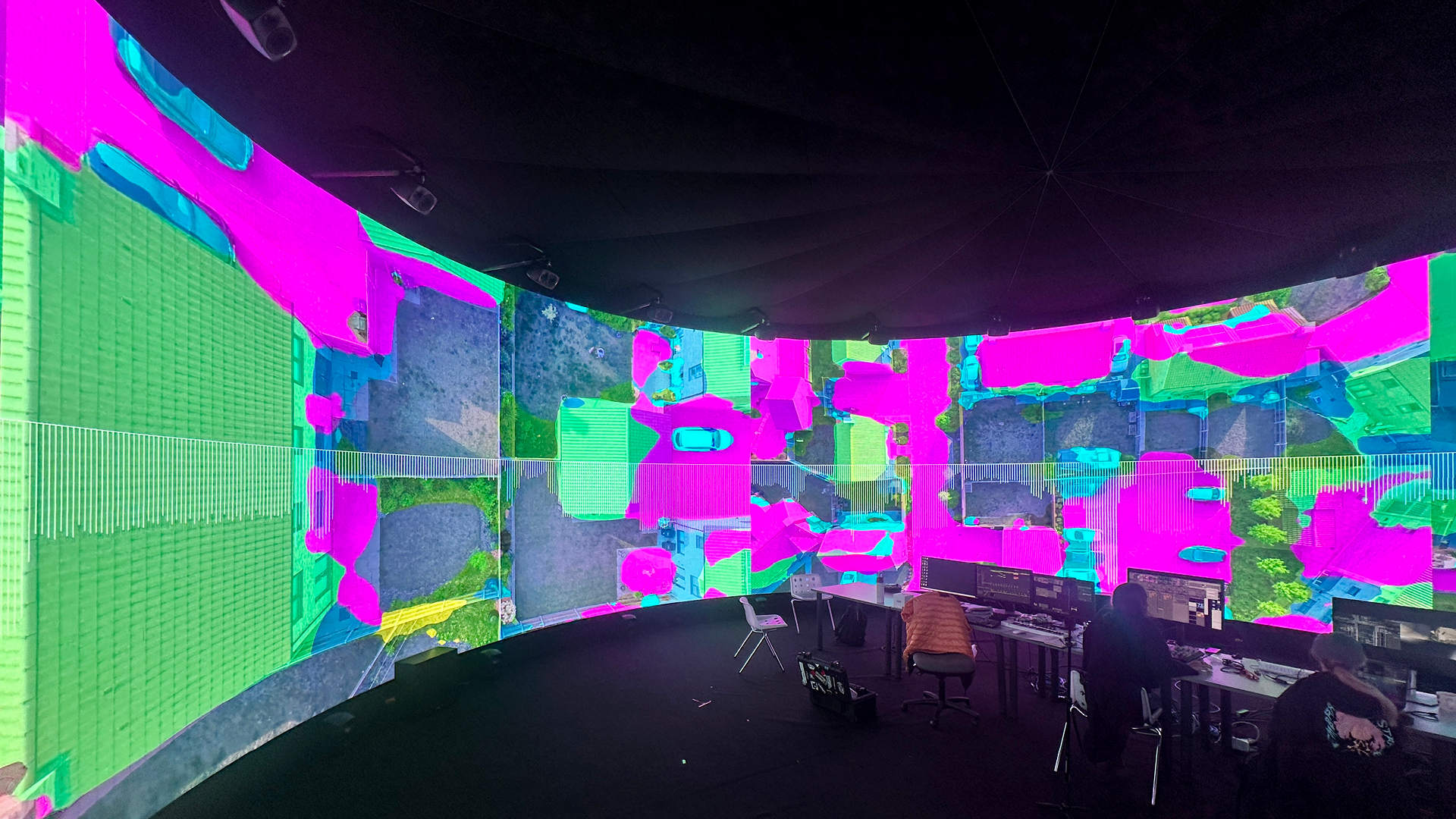

Multiple machine learning models were explored and evaluated for potential use during these sessions. YOLO object detection was explored within TouchDesigner, and semantic segmentation of aerial imagery was examined using a bespoke Python script, to determine whether these techniques could also be used to trigger and affect music.
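As a rough illustration of how detections might trigger music, the sketch below takes the centroid of one tracked object per frame and reports each time it crosses the vertical or horizontal centre line of the frame, which is the trigger condition mentioned in the methodology. The function names, the track format and the choice of a MIDI note-on as the resulting event are assumptions, not the team's implementation.

```python
def crossing_events(track, frame_width, frame_height):
    """Return (frame_index, line) for each centre-line crossing.

    `track` is a list of (x, y) centroid positions in pixels, one per frame,
    for a single tracked object (hypothetical input format).
    """
    cx, cy = frame_width / 2, frame_height / 2
    events = []
    for i in range(1, len(track)):
        (x0, y0), (x1, y1) = track[i - 1], track[i]
        # The object crossed a line if it changed sides between two frames.
        if (x0 < cx) != (x1 < cx):
            events.append((i, "vertical"))
        if (y0 < cy) != (y1 < cy):
            events.append((i, "horizontal"))
    return events


def note_on(note, velocity, channel=0):
    # Raw MIDI note-on message: status byte 0x90 plus channel, note, velocity.
    return bytes([0x90 | channel, note, velocity])


# Example: an object drifting right, then back left, in a 100 x 200 frame.
track = [(10, 50), (40, 50), (60, 50), (70, 50), (30, 50)]
for frame_index, line in crossing_events(track, 100, 200):
    message = note_on(60, 100)  # middle C, chosen arbitrarily
    print(frame_index, line, message.hex())
```

Comparing sides rather than testing for an exact position means a fast-moving object that jumps over the line between two frames still produces a trigger.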

The team also tested several pre-trained segmentation models on the drone footage. The Thalirajesh/Aerial-Drone-Image-Segmentation model showed the most promise, particularly on suburban scenes. In work led by Roz Gardner and Richard Hall at Collusion, video processing pipelines were developed to generate colour segmentation overlays with per-frame class distribution data.
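The per-frame class distribution mentioned above can be thought of as the share of pixels assigned to each class in a segmentation mask. The following sketch assumes the mask is already available as a grid of integer class IDs; the class names are placeholders and are not the label set of the model named above.

```python
from collections import Counter


def class_distribution(mask, class_names):
    """Return the fraction of pixels per class for one segmentation mask.

    `mask` is a list of rows of integer class IDs; `class_names` maps IDs to
    labels. Both are hypothetical inputs for illustration.
    """
    counts = Counter(label for row in mask for label in row)
    total = sum(counts.values())
    return {
        class_names.get(label, f"class_{label}"): count / total
        for label, count in counts.items()
    }


def dominant_class(distribution):
    # The class covering the largest share of the frame.
    return max(distribution, key=distribution.get)


# Placeholder labels, not the real classes of any particular model.
names = {0: "vegetation", 1: "building", 2: "road"}
mask = [
    [0, 0, 1],
    [0, 2, 1],
]
distribution = class_distribution(mask, names)
print(distribution, dominant_class(distribution))
```

A distribution like this gives one number per class per frame, so each class share could in principle drive its own musical parameter, in the same way as the brightness or movement values.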

The creative context of this research reflects on a history of sonification, from 19th-century telegraph sounders to NASA’s astronomical data conversions, while positioning this work within the contemporary audio-visual practices of The Light Surgeons and other media arts practitioners. The research investigates relationships between signal and noise, order and chaos, as metaphors for humanity’s position within the natural world.

This research aims to break new ground between the visual arts and sonic arts, to find new ways for each to inform the other and to provide new pathways for the development of audio-visual art in immersive environments like the IVSL space.

The research opened up a great deal of new learning, including using Claude AI to code shaders and to integrate TouchDesigner plugins and controls into VDMX. The team discovered how to build MaxMSP patches that used NDI video streams from VDMX and TouchDesigner to drive musical compositions, and how to route these audio and visual streams into the immersive IVSL system in real time.

They discovered that pre-trained aerial segmentation models require a lot of fine-tuning for lower-altitude drone footage. The SegFormer architecture proved most stable for continuous video processing, while YOLO models produced visually compelling but unstable “glitchy” aesthetics.

The streaming of visual data into Ableton opened up some exciting new territory, but uniting this learning with the CV tools developed and with fully fleshed-out musical compositions will take more time. We are seeking opportunities to expand on this research and hope to explore different visual typologies, instrumental palettes, and installation and performative forms of presentation, leading to a fully formed new audio-visual artwork in the near future.

Project Credits:

| Role | Name |
| --- | --- |
| Artistic Direction: | Christopher Thomas Allen |
| Sound Design & Composition: | Tim Cowie |
| TouchDesigner Developer: | Cécile Lebon |
| Technical Consultancy: | Marcus Lyall |
| Additional Programming: | Richard Hall & Roz Gardner |
| Drone Footage: | Christopher Thomas Allen |

Project Partners:

Norwich University of the Arts

Institute for Creative Technologies

Technical Support:

Thanks to:

David Lubin & Vidvox

Dr Philip Archer